As enterprises race to leverage AI for competitive advantage, the complexity and scope of the associated risks continue to grow. Anticipating and preparing for these risks is crucial for any organization aiming to harness the full potential of AI technologies. To help guide organizations through this transformation, we published “The Road to Enterprise-Ready AI”, a comprehensive report outlining the key risks and emerging solutions in the rapidly changing AI landscape.

This report combines the expertise of our CISO and CDAO villages, bringing together best practices from cybersecurity, privacy, compliance, fairness and robustness. Drawing on that breadth, we have not only outlined tips and predictions for security and data teams but also mapped what the future of innovation in this space looks like.

As the market begins to offer cutting-edge solutions to new, AI-driven problems, enterprise technology leaders need to move quickly; laggards may face much greater exposure. To quote Christoph Peylo, Chief Cybersecurity Officer at Bosch, “The companies that will learn to do this well, and learn to do this now – not tomorrow – these will emerge as industry leaders, setting the benchmark as AI transforms the global business and technology landscape.”

Here are the main takeaways from the report.

The Stakes and Challenges: Understanding the Top 6 AI Risks

- Data Privacy

AI models require vast amounts of data for training and operation, often involving sensitive or regulated information. Ensuring data is anonymized and compliant with regulations such as GDPR and HIPAA is crucial. Proper management of training platforms and enforcement of robust access controls are essential to prevent data breaches.

- Adversarial Threats

AI systems are susceptible to various malicious attacks, including prompt injection, data poisoning, model extraction, and evasion attacks. These threats can manipulate AI models, leading to skewed results or unauthorized access, making comprehensive security measures imperative.

- Compliance and Governance

Navigating the regulatory landscape is a significant challenge for AI adoption. Non-compliance with regulations like the EU AI Act can result in severe penalties. Establishing strong governance practices to ensure AI models are used ethically and legally is crucial.

- Bias and Fairness

AI models can inadvertently amplify biases present in their training data, leading to unfair outcomes. Enterprises must implement techniques to identify and mitigate bias, such as diverse training data and fairness constraints, to avoid legal and reputational risks.

- Content Liability

AI-generated content poses risks of intellectual property infringement, inappropriate or unsafe content generation, and inaccuracies. Rigorous testing and monitoring are required to ensure content generated by AI is accurate, safe, and compliant with relevant laws.

- Explainability

The complexity of AI models often makes their decision-making processes opaque. Explainability is crucial for building trust and meeting regulatory requirements. Post-hoc attribution techniques such as SHAP and LIME help explain individual predictions of otherwise opaque models, enhancing accountability and user confidence.
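SHAP builds on Shapley values from cooperative game theory: each feature's attribution is its average marginal contribution to a prediction across all subsets of the other features. As a rough illustration of that idea (not the `shap` library's API), the sketch below computes exact Shapley attributions for one prediction of a hypothetical three-feature model. It assumes a feature is "absent" when it is replaced by a baseline value; SHAP's explainers define absence in several ways, and because exact enumeration grows as 2^n in the number of features, practical tools rely on approximations or model-specific algorithms.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Sequence


def shapley_values(
    predict: Callable[[Sequence[float]], float],
    x: Sequence[float],
    baseline: Sequence[float],
) -> list[float]:
    """Exact Shapley attributions for a single prediction.

    Assumption: a feature absent from a coalition takes its baseline value.
    Every coalition is enumerated, so this is only feasible for a few features.
    """
    n = len(x)

    def value(coalition: frozenset[int]) -> float:
        # Features in the coalition keep their real value; the rest use the baseline.
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(z)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Shapley weight shared by every coalition of this size that excludes i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                # Marginal contribution of feature i when added to coalition s.
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


# Hypothetical toy scoring model with an income-by-tenure interaction term.
def toy_model(z: Sequence[float]) -> float:
    income, debt, years = z
    return 2.0 * income - 1.5 * debt + 0.5 * income * years


x = [3.0, 1.0, 2.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print(phi)
```

Up to floating-point rounding, the attributions are 7.5 for income, -1.5 for debt and 1.5 for years. The linear terms are credited to their own features, the interaction term (worth 3.0) is split evenly between income and years, and the attributions sum to the gap between the prediction and the baseline prediction (7.5), which is the "efficiency" property that makes Shapley-based explanations easy to audit.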

AI Security: Team8’s Strategic Approach

Security and engineering teams alike are still working out how best to secure AI technologies. As AI spreads through professional environments, organizations need a comprehensive security approach, equipped with the right tooling, that addresses both the consumption of third-party AI tools and the creation of AI models (including fine-tuning) and AI-based applications.

We believe that a truly robust security strategy should involve the following 4 aspects:

Solutions for AI Adoption are Emerging: Team8’s AI Infrastructure Map

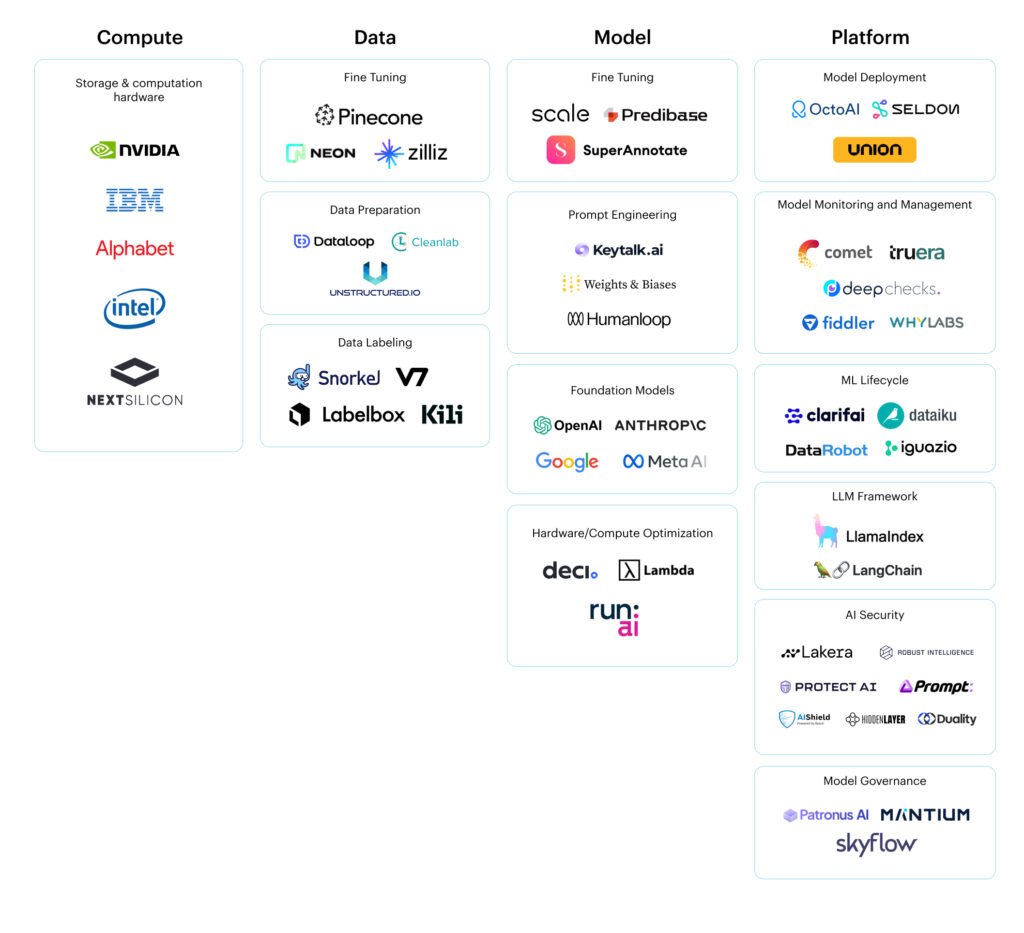

Over the past year, alongside the development of foundation models, we have seen rapid growth in infrastructure products that help companies build and deploy AI models. This growth warranted a new landscape, and we’re excited to release our AI Infrastructure Map alongside the Data Ecosystem Map. We expect the solutions in this map to form the new AI technology stack, which we divide into four layers: Compute, Data, Model, and Platform.